The Fragmentation Problem

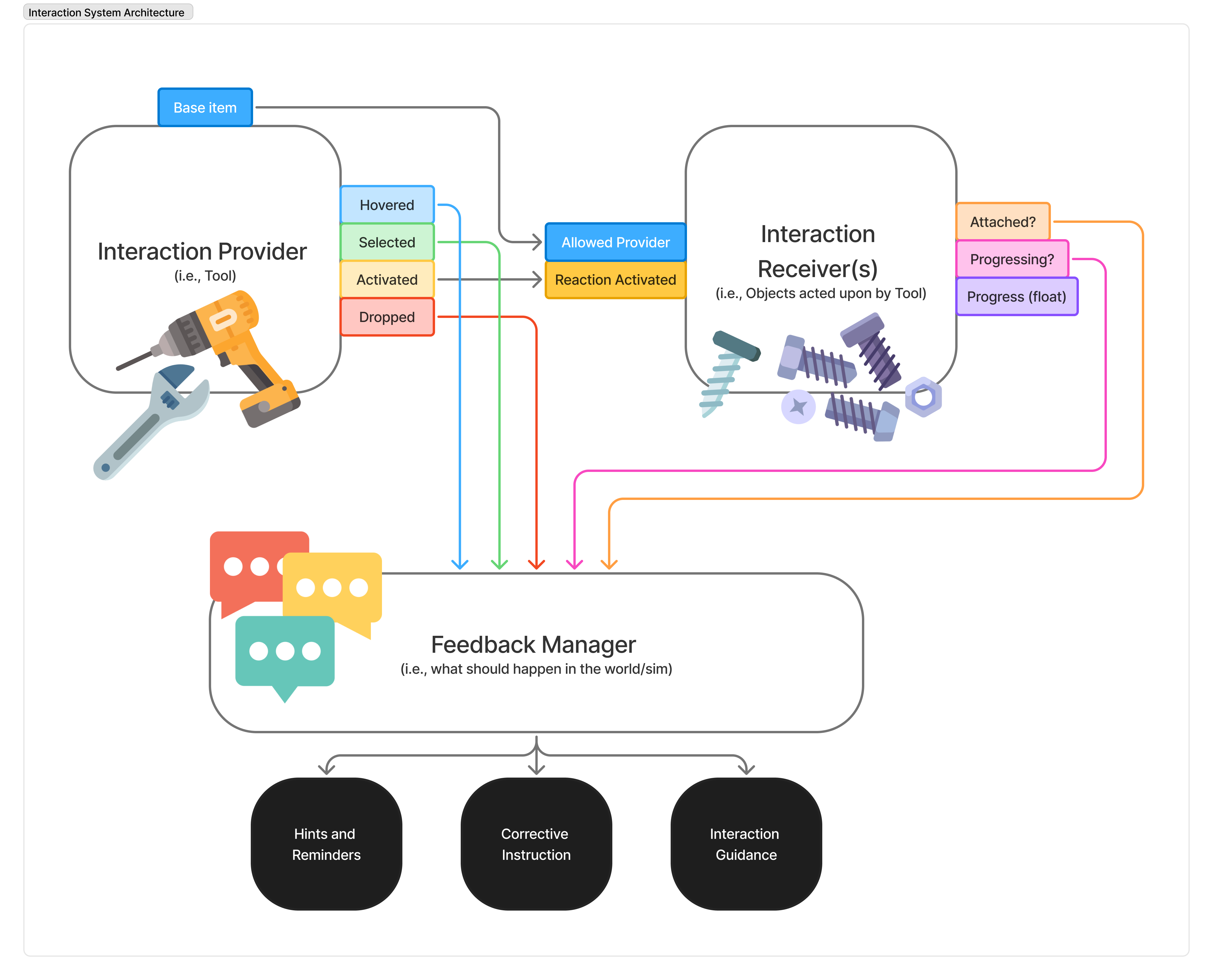

As we scaled VR production, fragmented UI/UX patterns across dozens of simulations led to inconsistent design quality. Our biggest hurdle was a bloated production pipeline in which technical artists and designers had to rebuild basic assets and interaction models from scratch for every new training module. To address this inefficiency, I proposed a comprehensive XR design system: a modular architecture for interaction design built directly on top of the proprietary Transfr SDK.

Aligning SDK Tools with Interaction Design

This proposal led to my direct involvement in the SDK's tools development. The goal was to bridge the gap between concept and code by ensuring the SDK natively supported the strict, consistent requirements of the new XR design system. By standardizing physical hand interactions, UI scaling, and spatial ergonomics, we created an ecosystem where building a complex interaction felt like snapping together building blocks rather than coding bespoke solutions.

Node Flow & Baked-in Logic

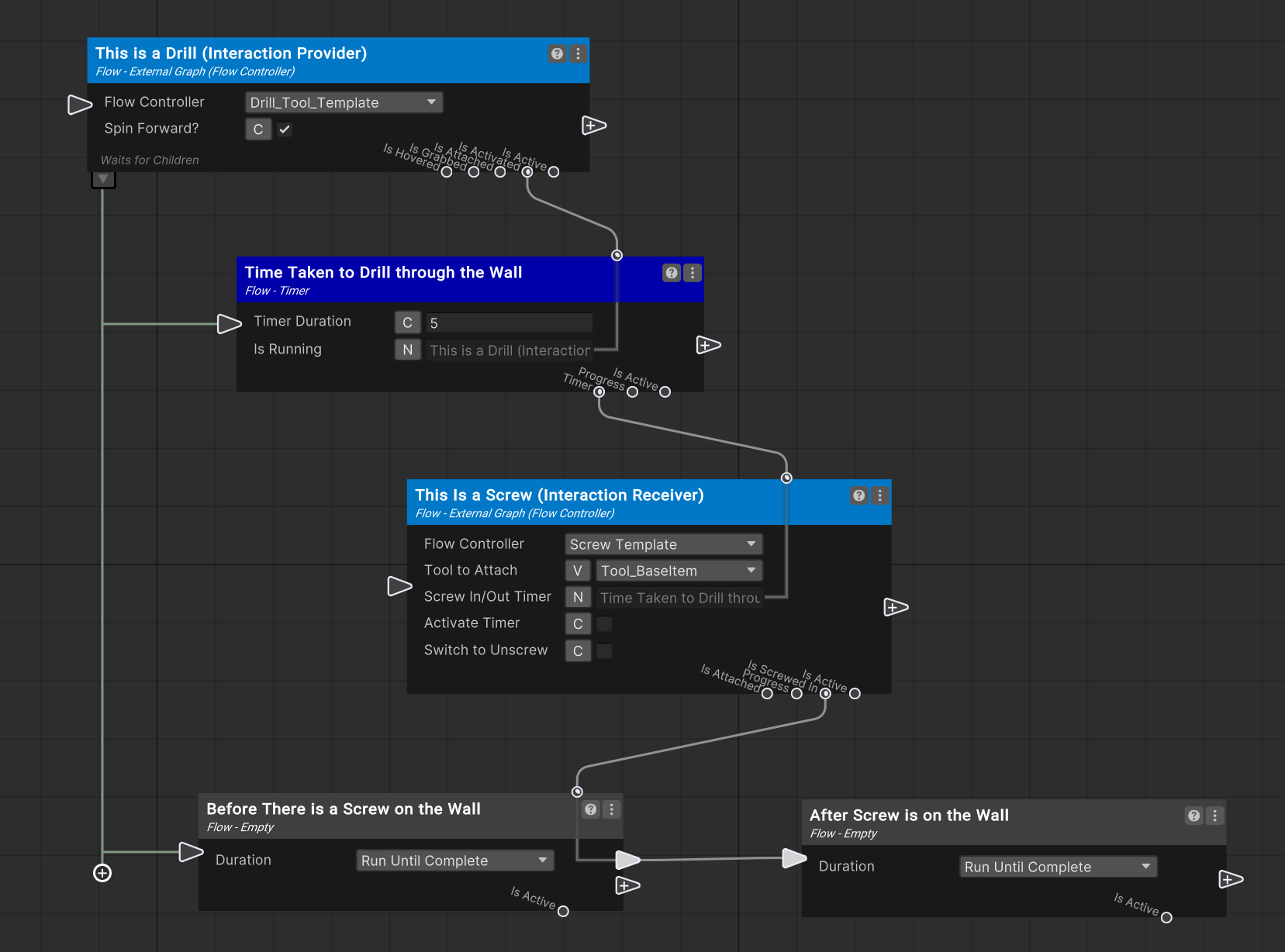

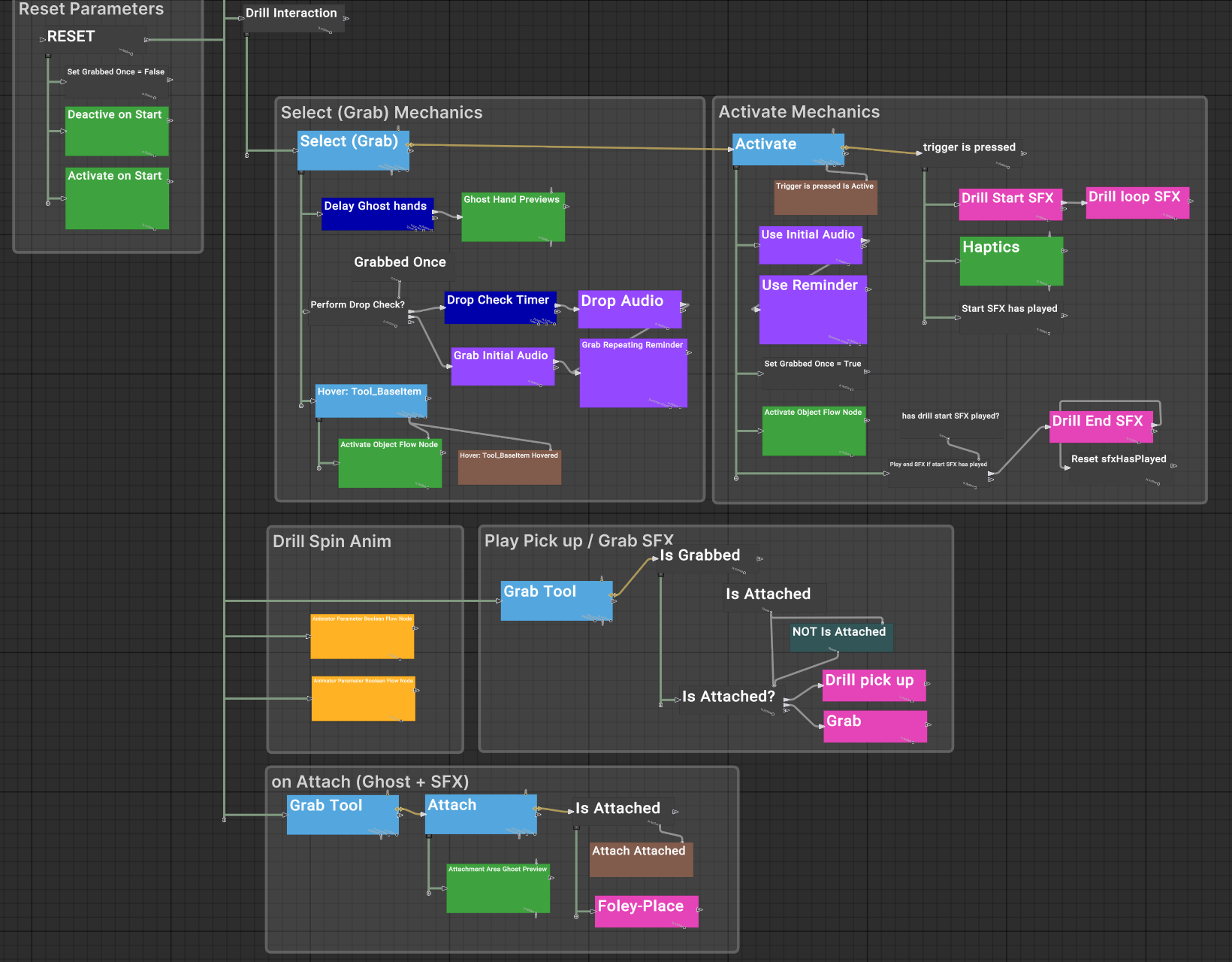

A major breakthrough in this architecture was Node Flow, a visual, node-based authoring tool for building VR simulations, which I helped develop. I helped engineer reusable, tool-based interaction templates for standard VR objects such as drills, wrenches, spray cans, scissors, and wire cutters. Rather than being static 3D models, these grabbable objects came pre-configured with baked-in interaction logic, spatial audio effects, haptic feedback profiles, and UX affordances.
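To make the idea of a template with baked-in feedback concrete, here is a minimal Python sketch. It does not show the Transfr SDK or Node Flow, whose APIs are not covered here. Every class, field, and value in it (`InteractionTemplate`, `HapticProfile`, `Interactable`, the clip names, the simulation names) is a hypothetical stand-in, chosen only to show how one definition can carry its audio, haptics, and affordances wherever it is placed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HapticProfile:
    """Controller vibration settings (hypothetical fields)."""
    amplitude: float   # 0.0 (off) to 1.0 (max)
    duration_ms: int


@dataclass(frozen=True)
class InteractionTemplate:
    """A reusable tool definition with its feedback baked in (illustrative only)."""
    name: str
    grab_pose: str                      # hand pose shown while the tool is held
    sfx: dict[str, str]                 # event name -> audio clip id
    haptics: dict[str, HapticProfile]   # event name -> haptic profile
    affordances: tuple[str, ...] = ()   # e.g. a highlight on hover

    def cues_for(self, event: str) -> list[str]:
        """List the feedback cues this template fires for an event."""
        cues = []
        if event in self.sfx:
            cues.append(f"audio:{self.sfx[event]}")
        if event in self.haptics:
            h = self.haptics[event]
            cues.append(f"haptic:{h.amplitude}@{h.duration_ms}ms")
        return cues


@dataclass
class Interactable:
    """One placement of a template inside a specific simulation."""
    simulation: str
    template: InteractionTemplate

    def on_event(self, event: str) -> list[str]:
        # Behavior comes entirely from the shared template.
        return self.template.cues_for(event)


# Hypothetical drill template; clip ids and values are made up for illustration.
DRILL = InteractionTemplate(
    name="drill",
    grab_pose="pistol_grip",
    sfx={"grab": "drill_pickup", "use": "drill_spin"},
    haptics={"use": HapticProfile(amplitude=0.8, duration_ms=120)},
    affordances=("hover_highlight",),
)

# The same template is dropped into two simulations without rework.
drills = [Interactable(sim, DRILL) for sim in ("framing", "electrical")]
for d in drills:
    print(d.simulation, d.on_event("use"))
```

Because each `Interactable` only references the template, a change to the drill's haptics or sound is made once and applies to every simulation that uses it.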

Scaling Production Value

The result was transformational. With all haptics, SFX, and UX logic baked directly into a standardized template, these interactable assets could be reused immediately across an entire VR training module, which often comprised 10 to 12 distinct simulations. This unified approach:

- saved massive amounts of redundant production time,

- drastically accelerated our delivery timelines, and

- established a consistently premium benchmark for design quality across the platform.