The Design Journey: From Empathy to Architecture

Inclusive design isn't just a technical challenge; it's a human one. My process was rooted in a deep, iterative cycle of research and prototyping.

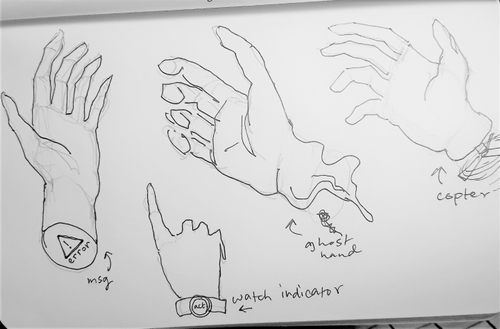

- Ideation & Sketching: I began with rapid sketches, exploring how we could "un-map" standard controller inputs and redistribute them across other channels the body offers, such as gaze and voice.

- User Interviews: I conducted in-depth interviews with individuals in the disability community. These conversations moved beyond technical needs and into the emotional landscape of immersion, in particular the frustration of being "locked out" of a digital world by rigid hardware requirements.

- User Profiling: I synthesized these insights into one user profile spanning a spectrum of accessibility needs. This helped me move away from "edge-case" thinking and toward a "universal design" mindset.

- Low-Fidelity Prototyping: I built initial cardboard prototypes and strapped laser pointers to my head to stand in for the gaze pointer. The question I was testing: could a user effectively navigate a 3D space without the tactile feedback of a trigger or grip?

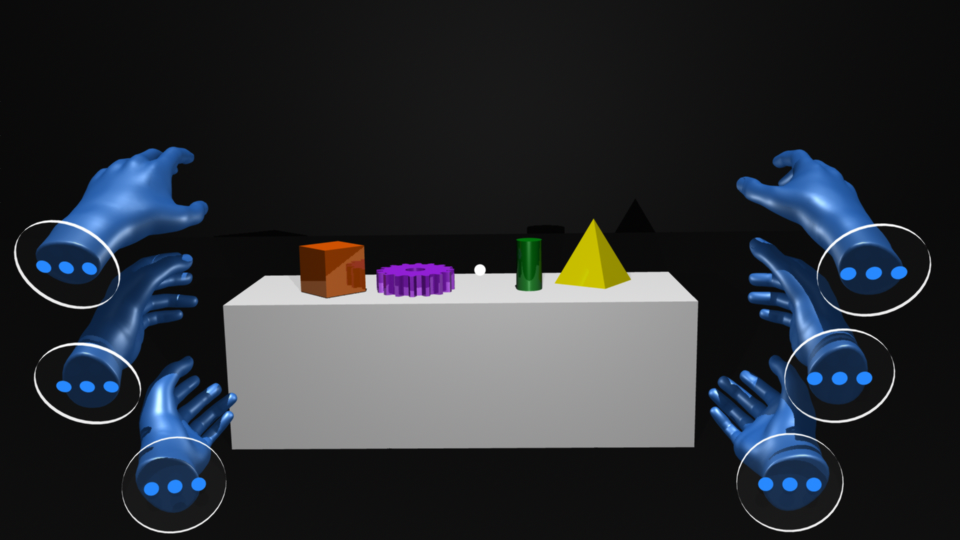

- The Multi-Modal Solution: The final prototype used a voice- and gaze-based interaction model. By combining eye-tracking for selection with natural language processing for execution, I created a seamless "Free Hand" experience that offered full functionality without a single hand gesture.
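
To make the split between "gaze selects" and "voice executes" concrete, here is a minimal sketch in Python. It is not the prototype's actual code: the class names, the 0.5-second dwell threshold, and the exact-phrase command lookup (a stand-in for real natural language processing) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class SceneObject:
    """Hypothetical stand-in for anything the user can act on."""
    name: str
    state: str = "idle"


@dataclass
class GazeVoiceController:
    """Gaze picks the target; a spoken command acts on it."""
    dwell_seconds: float = 0.5  # assumed threshold, not taken from the project
    commands: Dict[str, Callable[[SceneObject], None]] = field(default_factory=dict)
    selected: Optional[SceneObject] = None
    _candidate: Optional[SceneObject] = field(default=None, init=False)
    _gaze_start: float = field(default=0.0, init=False)

    def on_gaze(self, target: Optional[SceneObject], now: float) -> None:
        """Call on every gaze sample; `target` is whatever the gaze ray hits."""
        if target is not self._candidate:
            # Gaze moved to something new: restart the dwell timer.
            self._candidate = target
            self._gaze_start = now
            return
        if target is not None and now - self._gaze_start >= self.dwell_seconds:
            # Selection persists until the user dwells on another object.
            self.selected = target

    def on_voice(self, utterance: str) -> bool:
        """Run the command on the selected object; return True if it ran."""
        action = self.commands.get(utterance.strip().lower())
        if action is None or self.selected is None:
            return False
        action(self.selected)
        return True


def grab(obj: SceneObject) -> None:
    obj.state = "held"


def release(obj: SceneObject) -> None:
    obj.state = "idle"


controller = GazeVoiceController(commands={"grab": grab, "release": release})
lamp = SceneObject("lamp")
controller.on_gaze(lamp, now=0.0)   # gaze lands on the lamp
controller.on_gaze(lamp, now=0.6)   # still looking after the dwell threshold
controller.on_voice("Grab")         # the spoken command acts on the selection
print(lamp.state)                   # prints "held"
```

Keeping selection and execution in separate methods means either channel could be swapped out, for example replacing dwell with a blink or the phrase table with a speech-intent model, without touching the other.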

Learnings: From Accessibility to "Superpowers"

In the process of solving the physical mobility problem, I stumbled upon a realization that changed my perspective on the future of XR. While I was focused on creating an alternative for those who couldn't use their hands, I discovered that this interaction schema actually functions as a digital superpower for everyone.

When we stop viewing gaze and voice as "replacements" and start viewing them as "extensions," the possibilities explode. Imagine a VR environment where you use your physical hands for tactile work, while your gaze and voice act as a second or third set of "ghost hands" to manage menus or move distant objects simultaneously.

By designing for the "limited" case, we inadvertently unlock new tiers of human performance for the general user. It raises a compelling question for the future of spatial computing: Why settle for two hands when the digital world allows us to have many?